About four months ago, I set out to fix a looming technology problem at home: I had no solid backup strategy. After some arguably unscientific research, I came up with a solution that has given me a decent amount of that ever-sought-after peace of mind. It looked something like this:

- I subscribed to JungleDisk using Amazon S3 storage. It backed up about 30 GB of data (in about 10 days! ouch!) and then ran in the early morning to keep itself in sync.

- I set up an rdiff-backup script to mirror the important stuff to an external USB drive.

- I created a Subversion repository for all of my installers, tools, and installation CD ISO images (which I created using MagicISO).

- I copied my entire media library onto a cheap, huge external SATA drive and brought it to work, which is a more secure location than my house.

- I mirrored my svn repository onto that external drive as well and set up a batch script to update it weekly.

All in all, this was a really good first effort. I was happy and felt secure, though I haven't once needed to use this wonderful system. I have, however, found some parts of it annoying. First of all, JungleDisk is SLOW. Really, really slow. Well, OK, it's only slow to upload. It took TEN days to upload about 30 GB of data, which works out to about 34 KB/sec. As a test, I did some uploads to servers around the country (I have friends in fun places) and averaged about 100 KB/sec, so in my opinion either Amazon throttles its incoming bandwidth, which I can understand, or my route to S3 stinks. In addition, JungleDisk slows my computer down when it runs. I feel like that's really out of the question for a modern application.
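For anyone who wants to check the back-of-the-envelope math, here is a small Python sketch. It assumes decimal units (1 GB = 1,000,000 KB) and a perfectly steady transfer, neither of which is guaranteed in real life, and the function names are just made up for illustration.

```python
# Assumption: decimal units, so 1 GB = 1,000,000 KB.
KB_PER_GB = 1_000_000
SECONDS_PER_DAY = 24 * 60 * 60


def average_rate_kb_per_sec(gigabytes: float, days: float) -> float:
    """Average throughput in KB/s for a transfer of the given size and duration."""
    return gigabytes * KB_PER_GB / (days * SECONDS_PER_DAY)


def days_to_upload(gigabytes: float, kb_per_sec: float) -> float:
    """Days needed to move the given amount of data at a steady rate."""
    return gigabytes * KB_PER_GB / kb_per_sec / SECONDS_PER_DAY


if __name__ == "__main__":
    # 30 GB in 10 days: the JungleDisk upload described above.
    print(f"{average_rate_kb_per_sec(30, 10):.1f} KB/s")
    # The same 30 GB at the 100 KB/s seen to other servers.
    print(f"{days_to_upload(30, 100):.1f} days")
```

In other words, at the rate I measured to other servers, the same upload should have taken roughly three and a half days instead of ten.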

These things are annoying, but they aren't a hill to die on. What was really bothering me was the cost. I had estimated that it would cost about the same each month to use S3 as it does to use mozy.com or other similar options. I was wrong! It costs $4.95 each month for mozy, and about $7 each month for S3 with my usage. It's not a huge difference, but it is annoying.

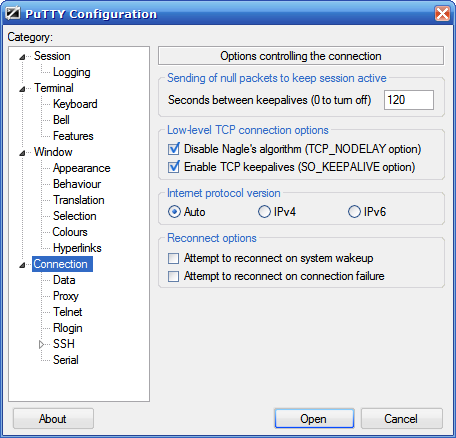

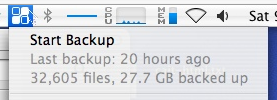

Back when I was looking at online storage, I tried mozy seriously and found the biggest weakness to be their Mac client, which, I failed to note at the time, was just freshly out of private beta. On a whim, I tried it again, still in beta but a tad newer. It still does that thing every time you start it up where it tries to scan your probable backup sets even if you don't want it to. It does not, however, keep crashing and it is, to my delight, a LOT faster. I got my average upload speed of about 100 KB/sec when I let it run unthrottled. And, even when I did that, my Mac barely noticed it was doing anything major with its network connection. The client lets you throttle the bandwidth use during a specific time range, so I turned it down to about 48 KB/sec when I'm home in the evenings so that the wife doesn't notice that it's chugging away. (She definitely noticed with JungleDisk... low WAF to be sure!) As a result, it uploaded the same 30 GB of data in under FOUR days. I even got to tail its log file and watch it upload each file. Exciting stuff, I tell you.

BUT, to complicate matters in the meantime, I had started using svn to store my digital images as well, so all of those lovely .svn directories were lying around, and there was no smart way to tell mozy to leave them alone. So, I devised a fairly straightforward workaround: I modified my rdiff-backup job to ignore these files and populated my mozy backup set with the rdiff'd backup set instead. I stagger the cron and mozy jobs to keep everything in sync, up to date and backed up. It works quite well, and I never have to look at it. Ever.
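Here is a minimal sketch of that kind of exclusion, not the actual job. It only builds the command line, and it assumes the classic `rdiff-backup --exclude` option with a `**/.svn` glob; check the man page for your version before trusting it. The paths and the `build_rdiff_command` helper are hypothetical.

```python
import shlex


def build_rdiff_command(source, destination, excluded_names=(".svn",)):
    """Build an rdiff-backup argument list that skips the named directories.

    Assumption: rdiff-backup's --exclude option takes a glob, and '**/NAME'
    matches NAME at any depth under the source directory.
    """
    command = ["rdiff-backup"]
    for name in excluded_names:
        command += ["--exclude", f"**/{name}"]
    command += [source, destination]
    return command


if __name__ == "__main__":
    # Hypothetical paths, for illustration only.
    cmd = build_rdiff_command("/Users/me/Pictures", "/Volumes/Backup/Pictures")
    # Print a shell-quoted version suitable for pasting into a cron script.
    print(shlex.join(cmd))
```

The online backup set then points at the destination directory, which never contains the .svn folders, and the cron entry just needs to finish comfortably before the online backup is scheduled to start.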

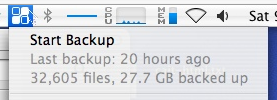

I do, of course, look at it every single day because I'm paranoid. I'd love to say I'll start trusting it to just keep working, but to be honest, I like knowing for certain that my data are safe in the event of a flood, fire, EMP, etc.

I also installed the Windows client at work under a different e-mail account (I only want the 2 GB free service and you can't use both with the same account) and use it to back up my work documents. We use Acronis at work, but we also use PGP whole-disk encryption, and I just don't trust that my drive won't some day get itself all corrupted in a tizzy. Acronis fails semi-randomly and so far mozy doesn't, so I'm not going to take any chances with stuff that's important because, well, that's the whole point. Besides, mozy's Windows client includes an awesome mapped drive that lets me browse right into my recent backup and grab files as needed. Seriously, when they add that feature to the Mac client my backup life will be complete. That was, actually, one of the only things I really liked about JungleDisk.

So, in the end, I only changed direction slightly. I have yet to do any of the other things I needed to do for extra security, though I did lock my Mac to my radiator pipes when I went away for two weeks. It seemed prudent at the time, though when I think about it now, it seems silly.